Charity wants AI summit to address child sexual abuse imagery

A leading children’s charity is urging Prime Minister Rishi Sunak to address AI-generated images of child sexual abuse when the UK hosts the first global summit on AI safety this autumn.

The Internet Watch Foundation (IWF), which removes abuse content from the web, says artificial intelligence (AI) images are on the rise.

AI images were first logged by the IWF last month.

It discovered predators from around the world sharing galleries of photo-realistic images.

Susie Hargreaves, chief executive officer of the IWF, explained, “It is clear to us that criminals have the potential to produce unprecedented quantities of lifelike images of child sexual abuse.”

Several images of girls around five years old posing naked in sexual positions were redacted for the BBC.

The IWF is one of only three charities licensed to actively search for online child abuse content, and is among the most active of them.

It began logging AI images on 24 May, and by 30 June analysts had investigated 29 sites and confirmed seven pages containing AI images.

According to the charity, the images were mixed with real abuse material shared on the illegal sites, but the exact number of images was not confirmed.

Some of them were what experts classify as Category A images, the most graphic category, depicting penetration.

In almost every country, child sexual abuse images are illegal.

Legislation must take this new threat into account and be fit for purpose in order to stay ahead of the emerging technology.

Earlier this year, Mr Sunak announced plans to host the first global summit on AI safety in the UK.

The government promises to bring together experts and lawmakers to address the risks of AI through internationally co-ordinated action.

IWF analysts monitor trends in abuse imagery, such as the recent rise of “self-generated” abuse content, where children are coerced into sending images or videos of themselves to predators.

While the number of discovered images is still a fraction of other types of abuse content, the charity is concerned that AI-generated imagery is on the rise.

In 2022, the IWF logged more than 250,000 web pages containing child sexual abuse imagery and attempted to have them taken offline.

Researchers also recorded predators’ conversations on forums, where they shared tips on how to create lifelike images of children.

There were guides on how to trick AI into drawing abuse images, and how to download open-source AI models.

Although most AI image generators have strict built-in rules to prevent users from generating content with banned words or phrases, open-source tools can be downloaded for free and tweaked according to the user’s preference.

The most popular of these tools is Stable Diffusion, whose code was released online by German AI academics in August 2022.

BBC News talked to an AI artist who makes sexualised images of preteen girls using Stable Diffusion.

The Japanese man said it was the first time in history that images of children could be made without exploiting real children.

Experts, however, say the images could be harmful.

“In my opinion, AI-generated images will increase these predilections and reinforce these deviances, leading to increased harm and a greater risk of harm to children around the world,” said Dr Michael Bourke, a United States Marshals Service specialist in sex offenders and paedophiles.

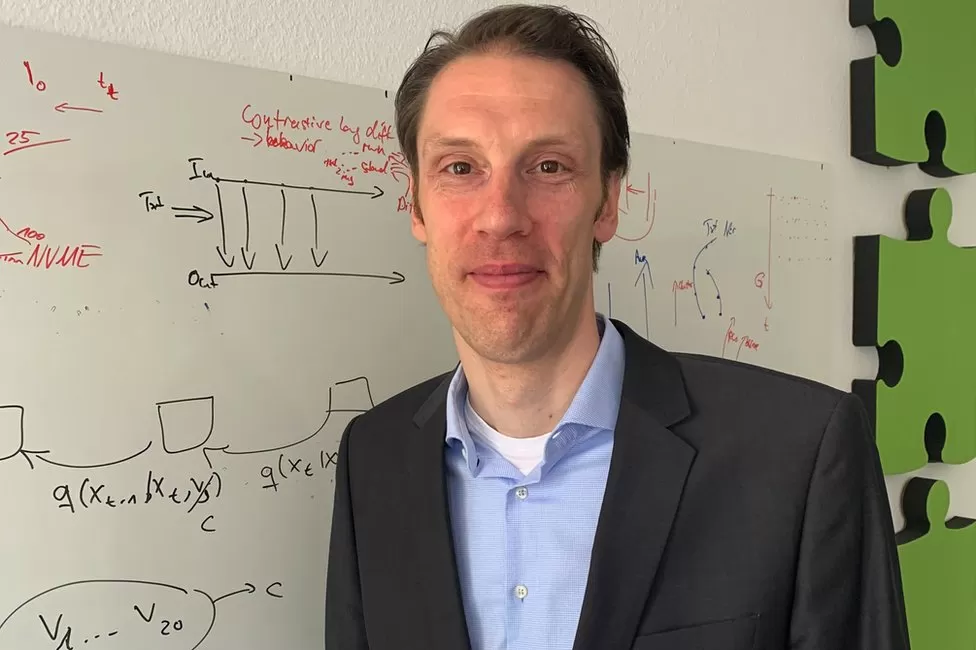

A lead developer of Stable Diffusion, Prof Bjorn Ommer, defended the decision to make it open source. It has since led to hundreds of academic research projects as well as many successful businesses, he told the BBC.

He points to this as vindication of his and his team’s decision, and insists that stopping research or development is not the right thing to do.

“In my opinion, we need to face the fact that this is a worldwide, global development. Stopping it here would not stop it from spreading globally to countries with probably non-democratic societies. In light of the rapid pace at which this development is moving, we need to figure out mitigation steps,” he said.

Among the most prominent companies building new versions of Stable Diffusion is Stability AI, which helped fund the model before its launch. It declined to be interviewed, but has previously said it prohibits the use of its AI for illegal or immoral purposes.